Workshop 15.2 - Q-mode inference testing

14 Jan 2013

Basic statistics references

- Legendre and Legendre

- Quinn & Keough (2002) - Chpt 17

Mantel tests

Vare et al. (1995) measured the cover abundance of 44 plant species at 24 sites so as to explore patterns in vegetation communities between these sites. They also measured a number of environmental variables (mainly concentrations of various soil chemicals) at each site so as to characterise the sites according to soil characteristics. Their primary interest was to investigate whether there was a correlation between the plant communities and the soil characteristics.

Data files: the vareveg data set (vareveg.csv) and the vareenv data set (vareenv.csv).

> vareveg <- read.csv("../downloads/data/vareveg.csv")
> vareenv <- read.csv("../downloads/data/vareenv.csv")

- Briefly explore the two data sets and determine the appropriate sorts of standardizations and distance matrices

- Vegetation data

What combination of standardization and distance matrix would you recommend?

> # species means
> apply(vareveg[, -1], 2, mean, na.rm = TRUE)

    C.vul   Emp.nig   Led.pal   Vac.myr   Vac.vit   Pin.syl   Des.fle   Bet.pub   Vac.uli   Dip.mon
 1.877917  6.332917  0.349583  2.112917 11.459583  0.171250  0.233333  0.012083  0.634167  0.135000
   Dic.sp   Dic.fus   Dic.pol   Hyl.spl   Ple.sch   Pol.pil   Pol.jun   Pol.com   Poh.nut   Pti.cil
 1.687500  4.730000  0.252500  0.751667 15.748750  0.025417  0.577083  0.029583  0.109167  0.583750
  Bar.lyc   Cla.arb   Cla.ran   Cla.ste   Cla.unc   Cla.coc   Cla.cor   Cla.gra   Cla.fim   Cla.cri
 0.132917 10.627083 16.196250 20.279583  2.345000  0.116250  0.259167  0.214167  0.165000  0.311250
  Cla.chl   Cla.bot   Cla.ama    Cla.sp   Cet.eri   Cet.isl   Cet.niv   Nep.arc    Ste.sp   Pel.aph
 0.048333  0.019583  0.005833  0.021667  0.150000  0.084583  0.493750  0.219167  0.730000  0.031667
  Ich.eri   Cla.cer   Cla.def   Cla.phy
 0.009167  0.004167  0.426250  0.033333

> # species maximums
> apply(vareveg[, -1], 2, max)

  C.vul Emp.nig Led.pal Vac.myr Vac.vit Pin.syl Des.fle Bet.pub Vac.uli Dip.mon  Dic.sp Dic.fus
  24.13   16.00    4.00   18.27   25.00    1.20    3.70    0.25    8.10    2.07   23.43   37.07
Dic.pol Hyl.spl Ple.sch Pol.pil Pol.jun Pol.com Poh.nut Pti.cil Bar.lyc Cla.arb Cla.ran Cla.ste
   3.00    9.97   70.03    0.25    6.98    0.25    0.32   10.00    3.00   39.00   59.00   84.30
Cla.unc Cla.coc Cla.cor Cla.gra Cla.fim Cla.cri Cla.chl Cla.bot Cla.ama  Cla.sp Cet.eri Cet.isl
  23.68    0.25    1.42    0.50    0.25    1.78    0.25    0.25    0.08    0.25    0.78    0.67
Cet.niv Nep.arc  Ste.sp Pel.aph Ich.eri Cla.cer Cla.def Cla.phy
  10.03    4.87   10.28    0.33    0.10    0.05    1.97    0.25

> # species sums
> apply(vareveg[, -1], 2, sum, na.rm = TRUE)

  C.vul Emp.nig Led.pal Vac.myr Vac.vit Pin.syl Des.fle Bet.pub Vac.uli Dip.mon  Dic.sp Dic.fus
  45.07  151.99    8.39   50.71  275.03    4.11    5.60    0.29   15.22    3.24   40.50  113.52
Dic.pol Hyl.spl Ple.sch Pol.pil Pol.jun Pol.com Poh.nut Pti.cil Bar.lyc Cla.arb Cla.ran Cla.ste
   6.06   18.04  377.97    0.61   13.85    0.71    2.62   14.01    3.19  255.05  388.71  486.71
Cla.unc Cla.coc Cla.cor Cla.gra Cla.fim Cla.cri Cla.chl Cla.bot Cla.ama  Cla.sp Cet.eri Cet.isl
  56.28    2.79    6.22    5.14    3.96    7.47    1.16    0.47    0.14    0.52    3.60    2.03
Cet.niv Nep.arc  Ste.sp Pel.aph Ich.eri Cla.cer Cla.def Cla.phy
  11.85    5.26   17.52    0.76    0.22    0.10   10.23    0.80

> # species variance
> apply(vareveg[, -1], 2, var, na.rm = TRUE)

    C.vul   Emp.nig   Led.pal   Vac.myr   Vac.vit   Pin.syl   Des.fle   Bet.pub   Vac.uli   Dip.mon
2.488e+01 2.278e+01 9.328e-01 2.322e+01 3.882e+01 7.510e-02 5.834e-01 2.600e-03 2.903e+00 1.847e-01
   Dic.sp   Dic.fus   Dic.pol   Hyl.spl   Ple.sch   Pol.pil   Pol.jun   Pol.com   Poh.nut   Pti.cil
2.953e+01 8.290e+01 4.601e-01 5.763e+00 3.570e+02 3.774e-03 2.117e+00 4.700e-03 1.031e-02 4.165e+00
  Bar.lyc   Cla.arb   Cla.ran   Cla.ste   Cla.unc   Cla.coc   Cla.cor   Cla.gra   Cla.fim   Cla.cri
3.734e-01 1.124e+02 2.223e+02 8.595e+02 2.468e+01 7.885e-03 7.913e-02 1.254e-02 6.478e-03 1.823e-01
  Cla.chl   Cla.bot   Cla.ama    Cla.sp   Cet.eri   Cet.isl   Cet.niv   Nep.arc    Ste.sp   Pel.aph
6.797e-03 2.726e-03 3.210e-04 2.693e-03 4.372e-02 2.404e-02 4.149e+00 9.838e-01 4.325e+00 6.867e-03
  Ich.eri   Cla.cer   Cla.def   Cla.phy
6.167e-04 1.471e-04 2.595e-01 7.101e-03

- Environmental data

What combination of standardization and distance matrix would you recommend?

> # environmental variables means
> apply(vareenv[, -1], 2, mean, na.rm = TRUE)

       N        P        K       Ca       Mg        S       Al       Fe       Mn       Zn       Mo
 22.3833  45.0792 162.9292 569.6625  87.4583  37.1917 142.4750  49.6125  49.3292   7.5958   0.3958
Baresoil Humdepth       pH
 22.9492   2.2000   2.9333

> # environmental variables maximums
> apply(vareenv[, -1], 2, max)

       N        P        K       Ca       Mg        S       Al       Fe       Mn       Zn       Mo
    33.1     73.5    313.8   1169.7    209.1     60.2    435.1    204.4    132.0     16.8      1.1
Baresoil Humdepth       pH
    56.9      3.8      3.6

> # variable sums
> apply(vareenv[, -1], 2, sum, na.rm = TRUE)

       N        P        K       Ca       Mg        S       Al       Fe       Mn       Zn       Mo
   537.2   1081.9   3910.3  13671.9   2099.0    892.6   3419.4   1190.7   1183.9    182.3      9.5
Baresoil Humdepth       pH
   550.8     52.8     70.4

> # environmental variables variance
> apply(vareenv[, -1], 2, var, na.rm = TRUE)

        N         P         K        Ca        Mg         S        Al        Fe        Mn        Zn
3.056e+01 2.234e+02 4.205e+03 5.933e+04 1.682e+03 1.361e+02 1.496e+04 3.654e+03 1.150e+03 8.904e+00
       Mo  Baresoil  Humdepth        pH
5.759e-02 2.703e+02 4.417e-01 4.580e-02

- Actually there are no incorrect answers to the above questions. Each combination of standardization and distance matrix will emphasize a different aspect of the multivariate data sets. Indeed, one of the attractions of Q-mode analyses is this very flexibility.

Perform the standardizations/distance matrices as indicated above.

> library(vegan)
> # species
> vareveg.std <- wisconsin(vareveg[, -1])
> vareveg.dist <- vegdist(vareveg.std, "bray")
> # environmental variables
> vareenv.std <- decostand(vareenv[, -1], "standardize")
> vareenv.dist <- vegdist(vareenv.std, "euc")
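To make these choices concrete, the following is a minimal base-R sketch of what a Wisconsin double standardization and a Bray-Curtis dissimilarity compute. The toy matrix `abund` and the helper names `wisconsin_manual` and `bray_manual` are made up for illustration and are not part of the workshop data or of vegan; in practice `wisconsin()` and `vegdist()` do this work.

```r
# Toy abundance matrix: 3 sites (rows) x 3 species (columns); values are made up
abund <- matrix(c(10, 0, 2,
                   5, 4, 0,
                   0, 8, 1),
                nrow = 3, byrow = TRUE,
                dimnames = list(paste0("site", 1:3), c("spA", "spB", "spC")))

# Wisconsin double standardization (hypothetical helper):
# 1. divide each species (column) by its maximum
# 2. divide each site (row) by its total
wisconsin_manual <- function(x) {
  x <- sweep(x, 2, apply(x, 2, max), "/")
  sweep(x, 1, rowSums(x), "/")
}

# Bray-Curtis dissimilarity between every pair of rows (hypothetical helper)
bray_manual <- function(x) {
  n <- nrow(x)
  d <- matrix(0, n, n, dimnames = list(rownames(x), rownames(x)))
  for (i in seq_len(n)) {
    for (j in seq_len(n)) {
      d[i, j] <- sum(abs(x[i, ] - x[j, ])) / sum(x[i, ] + x[j, ])
    }
  }
  as.dist(d)
}

abund_std  <- wisconsin_manual(abund)   # every row now sums to 1
abund_bray <- bray_manual(abund_std)    # dissimilarities bounded by 0 and 1

# For comparison: centre and scale each column, then take Euclidean distances,
# which is the approach used for the soil variables
abund_euc <- dist(scale(abund))
```

After the double standardization, rare and abundant species contribute on a comparable scale, which is why the choice of standardization changes which aspect of the community the distance matrix emphasizes.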

- Perform a Mantel test to calculate the correlation between the two matrices (vegetation and soil).

> library(vegan)
> mantel(vareveg.dist, vareenv.dist)

Mantel statistic based on Pearson's product-moment correlation

Call:
mantel(xdis = vareveg.dist, ydis = vareenv.dist)

Mantel statistic r: 0.427
      Significance: 0.001

Empirical upper confidence limits of r:
  90%   95% 97.5%   99%
0.161 0.205 0.248 0.285

Based on 999 permutations

- What was the R value?

> library(vegan)
> mantel(vareveg.dist, vareenv.dist)$statistic

[1] 0.4268

- Was this significant?
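The logic behind the Mantel test can be sketched in base R: correlate the two sets of pairwise distances, then repeatedly shuffle the site labels of one matrix to build a null distribution for that correlation. The function name `mantel_manual` and the simulated data below are assumptions made for illustration only; this is a simplified sketch, not vegan's implementation.

```r
set.seed(1)  # permutation results depend on the random seed

# Simulated stand-ins for two sets of measurements on the same 12 sites;
# both share a common underlying gradient, so their distances should correlate
n <- 12
gradient <- rnorm(n)
x <- cbind(gradient + rnorm(n, sd = 0.3), rnorm(n))
y <- cbind(gradient + rnorm(n, sd = 0.3), rnorm(n))
dx <- dist(x)
dy <- dist(y)

# Hypothetical helper: Pearson-based Mantel statistic with a permutation test
mantel_manual <- function(d1, d2, nperm = 999) {
  m1 <- as.matrix(d1)
  n <- nrow(m1)
  r_obs <- cor(as.vector(d1), as.vector(d2))
  r_perm <- replicate(nperm, {
    idx <- sample(n)  # shuffle rows and columns of one matrix together
    cor(as.vector(as.dist(m1[idx, idx])), as.vector(d2))
  })
  # One-sided p-value; the observed arrangement counts as one permutation
  p <- (sum(r_perm >= r_obs) + 1) / (nperm + 1)
  list(statistic = r_obs, signif = p)
}

res <- mantel_manual(dx, dy)
res$statistic
res$signif
```

With 999 permutations the smallest p-value this scheme can return is 1/(999 + 1) = 0.001, which is the significance value reported in the output above.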

- Generate a correlogram (multivariate correlation plot)

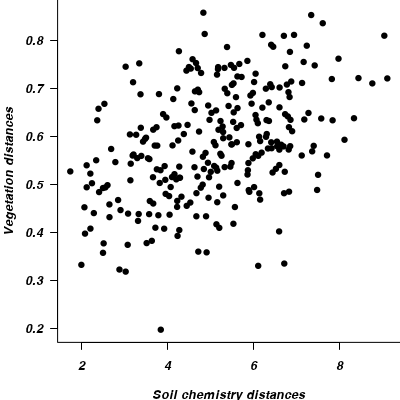

> par(mar = c(4, 4, 0, 0))
> plot(vareenv.dist, vareveg.dist, ann = FALSE, axes = FALSE, type = "n")
> points(vareenv.dist, vareveg.dist, pch = 16)
> axis(1)
> axis(2, las = 1)
> mtext("Vegetation distances", 2, line = 3)
> mtext("Soil chemistry distances", 1, line = 3)
> box(bty = "l")

Adonis - Permutational Multivariate analysis of variance

In the previous question we established that there was an association between the vegetation community and the soil chemistry. In this question we are going to delve a little deeper into that association. Now there are numerous ways that we could do this, so we are going to just focus on two of those.

- perform a permutational multivariate analysis of variance (adonis) of the vegetation community against concentrations of certain soil chemicals

- perform a permutational multivariate analysis of variance (adonis) of the vegetation community against the PCA axes scores from the soil chemistry data

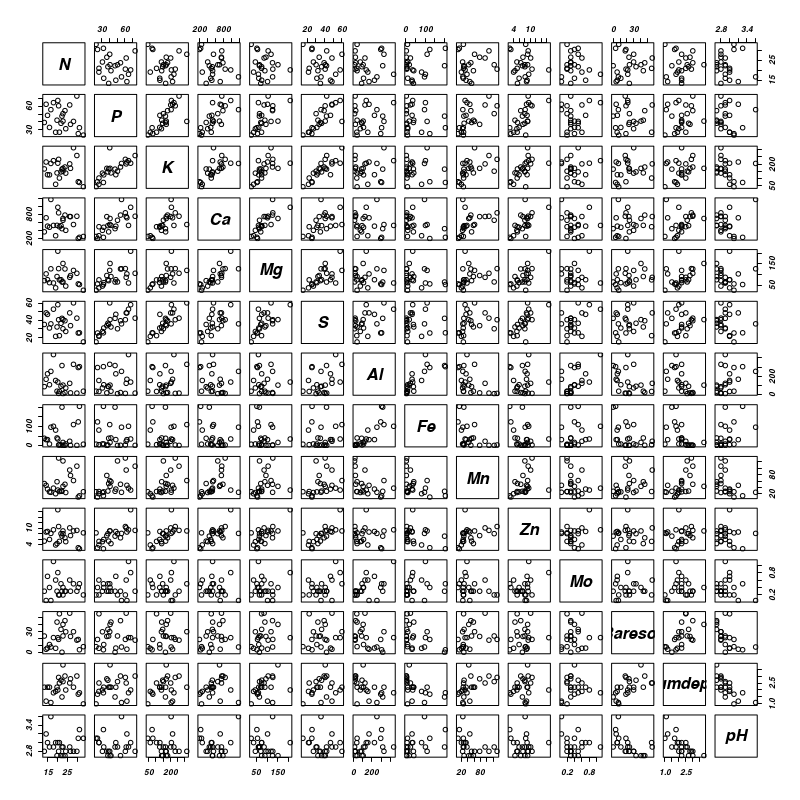

- Since permutational multivariate analysis of variance partitions variance sequentially, it is important that the predictors are uncorrelated with one another (otherwise the effects of those entered later in the model will be under-estimated). This is the (multi)collinearity issue.

Let's start by exploring the relationships amongst the soil chemistry variables.

> pairs(vareenv[, -1])

Clearly Phosphorus, Potassium, Calcium, Magnesium, Sulfur and Zinc are correlated with one another and thus cannot all be in the model. Similarly, Iron, Aluminium, percent of bare soil, pH and humus depth are correlated with each other.
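The visual impression from a scatterplot matrix can be complemented by the correlation matrix itself. The sketch below uses simulated variables (the names `N`, `P` and `K` are borrowed for readability, but the values are made up) and an arbitrary cut-off of 0.7 to list strongly correlated pairs; both the data and the threshold are assumptions for illustration, not recommendations.

```r
set.seed(42)
n <- 24

# Hypothetical soil variables: P and K share a common driver, N is independent
driver <- rnorm(n)
soil <- data.frame(
  N = rnorm(n),
  P = driver + rnorm(n, sd = 0.2),
  K = driver + rnorm(n, sd = 0.2)
)

cors <- cor(soil)
round(cors, 2)

# List variable pairs whose absolute correlation exceeds the chosen cut-off
cutoff <- 0.7  # arbitrary threshold, for illustration only
high <- which(abs(cors) > cutoff & upper.tri(cors), arr.ind = TRUE)
flagged <- data.frame(
  var1 = rownames(cors)[high[, "row"]],
  var2 = colnames(cors)[high[, "col"]],
  r = round(cors[high], 2)
)
flagged
```

Applied to the real data, the same idea would be `cor(vareenv[, -1])`, with the flagged pairs pointing to groups of variables from which only one representative should enter the model.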

- We might elect to include one representative from each of these groups, along with Nitrogen, in a model. Ideally we would base our selection on some sort of meaningful criteria. In the absence of anything even vaguely sensible around here, we will try the combination of Nitrogen, Calcium and Iron. Let's try it.

> adonis(vareveg.dist ~ N + Ca + Fe, data = vareenv)

Call:
adonis(formula = vareveg.dist ~ N + Ca + Fe, data = vareenv)

Terms added sequentially (first to last)

          Df SumsOfSqs MeanSqs F.Model    R2 Pr(>F)
N          1      0.27   0.267    1.70 0.065  0.045 *
Ca         1      0.38   0.381    2.42 0.093  0.004 **
Fe         1      0.30   0.303    1.93 0.074  0.032 *
Residuals 20      3.14   0.157         0.768
Total     23      4.09                 1.000
---
Signif. codes:  0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

- The alternative approach introduced earlier is to create a triplet of soil chemistry predictors from PCA. Let's have a try.

> vareenv.pca <- rda(vareenv[, -1], scale = TRUE)
> adonis(vareveg.dist ~ scores(vareenv.pca, 1)$sites + scores(vareenv.pca, 2)$sites + scores(vareenv.pca,
+     3)$sites)

Call:
adonis(formula = vareveg.dist ~ scores(vareenv.pca, 1)$sites + scores(vareenv.pca, 2)$sites + scores(vareenv.pca, 3)$sites)

Terms added sequentially (first to last)

                             Df SumsOfSqs MeanSqs F.Model    R2 Pr(>F)
scores(vareenv.pca, 1)$sites  1      0.46   0.461    3.09 0.113  0.001 ***
scores(vareenv.pca, 2)$sites  1      0.41   0.406    2.72 0.099  0.001 ***
scores(vareenv.pca, 3)$sites  1      0.25   0.249    1.67 0.061  0.064 .
Residuals                    20      2.98   0.149         0.728
Total                        23      4.09                 1.000
---
Signif. codes:  0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

Note that this has not necessarily told us a great deal more. All we have established is that there may well be three important gradients underlying the chemical ecosystem and that these may influence the vegetation communities.
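The reason PCA axes sidestep the collinearity problem is that principal component scores are uncorrelated with one another by construction. The following sketch illustrates this with simulated data, using base R's `prcomp()` as a stand-in for the `rda()` call above; the variable names and values are hypothetical.

```r
set.seed(7)
n <- 24

# Hypothetical soil variables: P and K are strongly correlated, N is independent
driver <- rnorm(n)
soil <- data.frame(
  N = rnorm(n),
  P = driver + rnorm(n, sd = 0.2),
  K = driver + rnorm(n, sd = 0.2)
)

# PCA on centred and scaled variables (comparable in spirit to scale = TRUE)
soil_pca <- prcomp(soil, center = TRUE, scale. = TRUE)
pc_scores <- soil_pca$x

round(cor(soil), 2)       # original variables: P and K are highly correlated
round(cor(pc_scores), 2)  # PC scores: off-diagonal correlations are zero

# Proportion of the total variance captured by each axis
summary(soil_pca)$importance["Proportion of Variance", ]
```

Because the axes are uncorrelated, the order in which they enter a sequential model no longer affects their individual sums of squares. The trade-off is that each axis is a blend of the original variables and so is harder to interpret.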

Permutational Multivariate analysis of variance

Jongman et al. (1987) presented a data set from a study in which the cover abundance of 30 plant species was measured on 20 rangeland dune sites. They also indicated the form of management each site experienced (biological farming, hobby farming, nature conservation management or standard farming). The major intention of the study was to determine whether the vegetation communities differed between the alternative management practices.

Data file: the dune data set (dune.csv).

> dune <- read.csv("../downloads/data/dune.csv")
> dune

   MANAGEMENT Belper Empnig Junbuf Junart Airpra Elepal Rumace Viclat Brarut Ranfla Cirarv Hyprad
1          BF      3      0      0      0      0      0      0      0      0      0      0      0
2          SF      0      0      3      0      0      0      0      0      0      2      0      0
3          SF      2      0      0      0      0      0      0      0      2      0      2      0
4          SF      0      0      0      3      0      8      0      0      4      2      0      0
5          HF      0      0      0      0      0      0      6      0      6      0      0      0
6          SF      0      0      0      0      0      0      0      0      0      0      0      0
7          HF      0      0      0      4      0      4      0      0      2      2      0      0
8          HF      2      0      0      0      0      0      5      0      2      0      0      0
9          NM      0      0      0      0      2      0      0      0      0      0      0      2
10         NM      0      0      0      3      0      5      0      0      4      2      0      0
11         BF      2      0      0      0      0      0      0      1      2      0      0      0
12         BF      0      0      0      0      0      0      0      2      4      0      0      2
13         HF      0      0      4      4      0      0      2      0      2      0      0      0
14         NM      2      0      0      0      0      0      0      1      6      0      0      0
15         SF      2      0      0      0      0      0      0      0      2      0      0      0
16         NM      0      0      0      4      0      4      0      0      4      4      0      0
17         NM      0      0      0      0      0      4      0      0      0      2      0      0
18         NM      0      2      0      0      3      0      0      0      3      0      0      5
19         SF      0      0      4      0      0      0      2      0      4      0      0      0
20         HF      0      0      2      0      0      0      3      0      2      0      0      0
   Leoaut Potpal Poapra Calcus Tripra Trirep Antodo Salrep Achmil Poatri Chealb Elyrep Sagpro
1       5      0      4      0      0      5      0      0      3      7      0      4      0
2       2      0      2      0      0      2      0      0      0      9      1      0      2
3       2      0      4      0      0      1      0      0      0      5      0      4      5
4       0      0      0      3      0      0      0      0      0      2      0      0      0
5       3      0      3      0      5      5      3      0      2      4      0      0      0
6       0      0      4      0      0      0      0      0      1      2      0      4      0
7       3      0      4      0      0      2      0      0      0      4      0      0      2
8       3      0      2      0      2      2      4      0      2      6      0      4      0
9       2      0      1      0      0      0      4      0      2      0      0      0      0
10      2      2      0      0      0      1      0      0      0      0      0      0      0
11      3      0      4      0      0      6      4      0      4      4      0      0      0
12      5      0      4      0      0      3      0      0      0      0      0      0      2
13      2      0      4      0      0      3      0      0      0      5      0      6      2
14      5      0      3      0      0      2      0      3      0      0      0      0      0
15      2      0      5      0      0      2      0      0      0      6      0      4      0
16      2      0      0      3      0      0      0      5      0      0      0      0      0
17      2      2      0      4      0      6      0      0      0      0      0      0      0
18      6      0      0      0      0      2      4      3      0      0      0      0      3
19      2      0      0      0      0      3      0      0      0      4      0      0      4
20      3      0      4      0      2      2      2      0      2      5      0      0      0
   Plalan Agrsto Lolper Alogen Brohor
1       0      0      5      2      4
2       0      5      0      5      0
3       0      8      5      2      3
4       0      7      0      4      0
5       5      0      6      0      0
6       0      0      7      0      0
7       0      4      4      5      0
8       5      0      2      0      2
9       2      0      0      0      0
10      0      4      0      0      0
11      3      0      6      0      4
12      3      0      7      0      0
13      0      3      2      3      0
14      3      0      2      0      0
15      0      4      6      7      0
16      0      5      0      0      0
17      0      4      0      0      0
18      0      0      0      0      0
19      0      4      0      8      0
20      5      0      6      0      2

- Briefly explore the dune data set and determine the appropriate sort of standardization and distance index.

> # species means
> apply(dune[, -1], 2, mean, na.rm = TRUE)

Belper Empnig Junbuf Junart Airpra Elepal Rumace Viclat Brarut Ranfla Cirarv Hyprad Leoaut Potpal
  0.65   0.10   0.65   0.90   0.25   1.25   0.90   0.20   2.45   0.70   0.10   0.45   2.70   0.20
Poapra Calcus Tripra Trirep Antodo Salrep Achmil Poatri Chealb Elyrep Sagpro Plalan Agrsto Lolper
  2.40   0.50   0.45   2.35   1.05   0.55   0.80   3.15   0.05   1.30   1.00   1.30   2.40   2.90
Alogen Brohor
  1.80   0.75

> # species maximums
> apply(dune[, -1], 2, max)

Belper Empnig Junbuf Junart Airpra Elepal Rumace Viclat Brarut Ranfla Cirarv Hyprad Leoaut Potpal
     3      2      4      4      3      8      6      2      6      4      2      5      6      2
Poapra Calcus Tripra Trirep Antodo Salrep Achmil Poatri Chealb Elyrep Sagpro Plalan Agrsto Lolper
     5      4      5      6      4      5      4      9      1      6      5      5      8      7
Alogen Brohor
     8      4

> # species sums
> apply(dune[, -1], 2, sum, na.rm = TRUE)

Belper Empnig Junbuf Junart Airpra Elepal Rumace Viclat Brarut Ranfla Cirarv Hyprad Leoaut Potpal
    13      2     13     18      5     25     18      4     49     14      2      9     54      4
Poapra Calcus Tripra Trirep Antodo Salrep Achmil Poatri Chealb Elyrep Sagpro Plalan Agrsto Lolper
    48     10      9     47     21     11     16     63      1     26     20     26     48     58
Alogen Brohor
    36     15

> # species variance
> apply(dune[, -1], 2, var, na.rm = TRUE)

Belper Empnig Junbuf Junart Airpra Elepal Rumace Viclat Brarut Ranfla Cirarv Hyprad Leoaut Potpal
1.0816 0.2000 1.9237 2.6211 0.6184 5.5658 3.2526 0.2737 3.6289 1.3789 0.2000 1.5237 2.4316 0.3789
Poapra Calcus Tripra Trirep Antodo Salrep Achmil Poatri Chealb Elyrep Sagpro Plalan Agrsto Lolper
3.4105 1.5263 1.5237 3.6079 2.8921 1.9447 1.5368 7.9237 0.0500 4.3263 2.4211 3.8000 7.2000 7.9895
Alogen Brohor
6.9053 1.9868

Whilst the means, maximums and variances are mostly fairly similar, there are some species such as Chealb and Empnig that are an order of magnitude less abundant and variable than the bulk of the species. Therefore a Wisconsin double standardization would still be appropriate. A Bray-Curtis dissimilarity would thence be a good choice.

- Perform the aforementioned standardization and distance measure procedures.

> library(vegan)
> dune.dist <- vegdist(wisconsin(dune[, -1]), "bray")

- Now perform the permutational multivariate analysis of variance relating the dune vegetation communities to the management practices.

> dune.adonis <- adonis(dune.dist ~ dune[, 1])
> dune.adonis

Call:
adonis(formula = dune.dist ~ dune[, 1])

Terms added sequentially (first to last)

          Df SumsOfSqs MeanSqs F.Model    R2 Pr(>F)
dune[, 1]  3      1.42   0.473    2.31 0.302  0.003 **
Residuals 16      3.28   0.205         0.698
Total     19      4.70                 1.000
---
Signif. codes:  0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

- The above analysis indicates that the dune vegetation communities do indeed differ between the different management practices. However, we may wish to explore whether the alternative farming practices (BF: biological farming, HF: hobby farming, and NM: nature conservation management) each differ in communities from the standard farming practices (SF).

We can do this by generating dummy codes for the management variable and defining treatment contrasts relative to the SF level.

> management <- factor(dune$MANAGEMENT, levels = c("SF", "BF", "HF", "NM"))
> mm <- model.matrix(~management)
> colnames(mm) <- gsub("management", "", colnames(mm))
> mm <- data.frame(mm)
> dune.adonis <- adonis(dune.dist ~ BF + HF + NM, data = mm)
> dune.adonis

Call:
adonis(formula = dune.dist ~ BF + HF + NM, data = mm)

Terms added sequentially (first to last)

          Df SumsOfSqs MeanSqs F.Model    R2 Pr(>F)
BF         1      0.33   0.334    1.63 0.071  0.129
HF         1      0.38   0.376    1.84 0.080  0.083 .
NM         1      0.71   0.709    3.47 0.151  0.009 **
Residuals 16      3.28   0.205         0.698
Total     19      4.70                 1.000
---
Signif. codes:  0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1
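To see what the dummy codes used above look like, the sketch below builds them for a short, made-up management vector (six hypothetical sites, not the dune data). Listing `SF` first in `levels` makes it the reference level, so each remaining column is an indicator for one alternative practice.

```r
# Made-up management vector using the same four codes as the dune data
management <- factor(c("SF", "BF", "HF", "NM", "SF", "BF"),
                     levels = c("SF", "BF", "HF", "NM"))  # SF first = reference

# Treatment (dummy) coding: an intercept plus one 0/1 column per non-reference level
mm <- model.matrix(~management)
colnames(mm) <- gsub("management", "", colnames(mm))
mm

# Rows for the reference level carry zeros in every indicator column
mm[management == "SF", c("BF", "HF", "NM")]
```

Each indicator column equals 1 only for sites under that practice, so a term such as `BF` in the model formula separates biological farming sites from the rest, with `SF` acting as the baseline.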

Permutational multivariate analysis of variance

Mac Nally (1989) studied geographic variation in forest bird communities. His data set consists of the maximum abundance of 102 bird species from 37 sites that were further classified into six different forest types (Gippsland manna gum, montane forest, woodland, box-ironbark, river red gum and mixed forest). He was primarily interested in determining whether the bird assemblages differed between forest types.

In Tutorial 15.1 we explored the bird communities using non-metric multidimensional scaling. We then overlaid the environmental habitat variable with a permutation test that explored the association of the habitat levels with the ordination axes. That is, we investigated the degree to which a treatment (habitat level) aligns with the axes.

Alternatively, we could use a multivariate analysis of variance approach to investigate the effects of habitat on the bird community structure.
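As a reminder of what such an analysis computes, a one-factor permutational multivariate analysis of variance can be sketched in base R directly from a distance matrix: sums of squares are obtained from squared pairwise distances, and the pseudo-F ratio is compared with its distribution under random reallocation of group labels. The function name `pseudo_F` and the simulated data are assumptions for illustration; this is a simplified one-factor sketch rather than vegan's implementation.

```r
set.seed(3)

# Toy data: 12 sites in 3 groups; group "c" is shifted on the first variable
group <- factor(rep(c("a", "b", "c"), each = 4))
resp <- matrix(rnorm(12 * 3), ncol = 3)
resp[group == "c", 1] <- resp[group == "c", 1] + 3
d <- dist(resp)

# Hypothetical helper: pseudo-F and R2 for a single grouping factor
pseudo_F <- function(d, group) {
  d2 <- as.matrix(d)^2
  N <- nrow(d2)
  a <- nlevels(group)
  # Total SS: sum of squared distances over all pairs, divided by N
  ss_total <- sum(d2[lower.tri(d2)]) / N
  # Within-group SS: squared distances among group members / group size
  ss_within <- sum(sapply(levels(group), function(g) {
    idx <- which(group == g)
    sub <- d2[idx, idx]
    sum(sub[lower.tri(sub)]) / length(idx)
  }))
  ss_among <- ss_total - ss_within
  c(F = (ss_among / (a - 1)) / (ss_within / (N - a)),
    R2 = ss_among / ss_total)
}

obs <- pseudo_F(d, group)

# Null distribution: recompute pseudo-F after shuffling the group labels
perm_F <- replicate(999, pseudo_F(d, sample(group))["F"])
p_value <- (sum(perm_F >= obs["F"]) + 1) / (999 + 1)

obs
p_value
```

The same arithmetic can be read off the dune management table earlier in this workshop: 0.473 / 0.205 is approximately 2.31 (F.Model) and 1.42 / 4.70 is approximately 0.302 (R2).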

Data file: the macnally data set (macnally_full.csv).

> macnally <- read.csv("../downloads/data/macnally_full.csv")
> macnally

HABITAT GST EYR GF BTH GWH WTTR WEHE WNHE SFW WBSW CR LK RWB AUR

Reedy Lake Mixed 3.4 0.0 0.0 0.0 0.0 0.0 0.0 11.9 0.4 0.0 1.1 3.8 9.7 0.0

Pearcedale Gipps.Manna 3.4 9.2 0.0 0.0 0.0 0.0 0.0 11.5 8.3 12.6 0.0 0.5 11.6 0.0

Warneet Gipps.Manna 8.4 3.8 0.7 2.8 0.0 0.0 10.7 12.3 4.9 10.7 0.0 1.9 16.6 2.3

Cranbourne Gipps.Manna 3.0 5.0 0.0 5.0 2.0 0.0 3.0 10.0 6.9 12.0 0.0 2.0 11.0 1.5

Lysterfield Mixed 5.6 5.6 12.9 12.2 9.5 2.1 7.9 28.6 9.2 5.0 19.1 3.6 5.7 8.8

Red Hill Mixed 8.1 4.1 10.9 24.5 5.6 6.7 9.4 6.7 0.0 8.9 12.1 6.7 2.7 0.0

Devilbend Mixed 8.3 7.1 6.9 29.1 4.2 2.0 7.1 27.4 13.1 2.8 0.0 2.8 2.4 2.8

Olinda Mixed 4.6 5.3 11.1 28.2 3.9 6.5 2.6 10.9 3.1 8.6 9.3 3.8 0.6 1.3

Fern Tree Gum Montane Forest 3.2 5.2 8.3 18.2 3.8 4.2 2.8 9.0 3.8 5.6 14.1 3.2 0.0 0.0

Sherwin Foothills Woodland 4.6 1.2 4.6 6.5 2.3 5.2 0.6 3.6 3.8 3.0 7.5 2.4 0.6 0.0

Heathcote Ju Montane Forest 3.7 2.5 6.3 24.9 2.8 7.4 1.3 4.7 5.5 9.5 5.7 2.9 0.0 1.8

Warburton Montane Forest 3.8 6.5 11.1 36.1 6.2 8.5 2.3 25.4 8.2 5.9 10.5 3.1 9.8 1.6

Millgrove Mixed 5.4 6.5 11.9 19.6 3.3 8.6 2.5 11.9 4.3 5.4 10.8 6.5 2.7 2.0

Ben Cairn Mixed 3.1 9.3 11.1 25.9 9.3 8.3 2.8 2.8 2.8 8.3 18.5 3.1 0.0 3.1

Panton Gap Montane Forest 3.8 3.8 10.3 34.6 7.9 4.8 2.9 3.7 4.8 7.2 5.9 3.1 0.6 3.8

OShannassy Mixed 9.6 4.0 5.4 34.9 7.0 5.1 2.6 6.4 3.9 11.3 11.6 2.3 0.0 2.3

Ghin Ghin Mixed 3.4 2.7 9.1 16.1 1.3 3.2 4.7 0.0 22.0 5.8 7.4 4.5 0.0 0.0

Minto Mixed 5.6 3.3 13.3 28.0 7.0 8.3 7.0 38.9 10.5 7.0 14.0 5.2 1.7 5.2

Hawke Mixed 1.7 2.6 5.5 16.0 4.3 6.7 3.5 5.9 6.7 10.0 3.7 2.1 0.5 1.5

St Andrews Foothills Woodland 4.7 3.6 6.0 25.2 3.7 7.5 4.7 10.0 0.0 0.0 4.0 5.1 2.8 3.7

Nepean Foothills Woodland 14.0 5.6 5.5 20.0 3.0 6.6 7.0 3.3 7.0 10.0 4.7 3.3 2.1 3.7

Cape Schanck Mixed 6.0 4.9 4.9 16.2 3.4 2.6 2.8 9.4 6.6 7.8 5.1 5.2 21.3 0.0

Balnarring Mixed 4.1 4.9 10.7 21.2 3.9 0.0 5.1 2.9 12.1 6.1 0.0 2.7 0.0 0.0

Bittern Gipps.Manna 6.5 9.7 7.8 14.4 5.2 0.0 11.5 12.5 20.7 4.9 0.0 0.0 16.1 5.2

Bailieston Box-Ironbark 6.5 2.5 5.1 5.6 4.3 5.7 6.2 6.2 1.2 0.0 0.0 1.6 5.0 4.1

Donna Buang Mixed 1.5 0.0 2.2 9.6 6.7 3.0 8.1 0.0 0.0 7.3 8.1 1.5 2.2 0.7

Upper Yarra Mixed 4.7 3.1 7.0 17.1 8.3 12.8 1.3 6.4 2.3 5.4 5.4 2.4 0.6 2.3

Gembrook Mixed 7.5 7.5 12.7 16.4 4.7 6.4 1.6 8.9 9.3 6.4 4.8 3.6 14.5 4.7

Arcadia River Red Gum 3.1 0.0 1.2 0.0 1.2 0.0 0.0 1.8 0.7 0.0 0.0 1.8 0.0 2.5

Undera River Red Gum 2.7 0.0 2.2 0.0 1.3 6.5 0.0 0.0 6.5 0.0 0.0 0.0 0.0 2.2

Coomboona River Red Gum 4.4 0.0 2.1 0.0 0.0 3.3 0.0 0.0 0.8 0.0 0.0 2.8 0.0 2.2

Toolamba River Red Gum 3.0 0.0 0.5 0.0 0.8 0.0 0.0 0.0 1.6 0.0 0.0 2.0 0.0 2.5

Rushworth Box-Ironbark 2.1 1.1 3.2 1.8 0.5 4.8 0.9 5.3 4.8 0.0 1.1 1.1 26.3 1.6

Sayers Box-Ironbark 2.6 0.0 1.1 7.5 1.6 5.2 3.6 6.9 6.7 0.0 2.7 1.6 8.0 1.6

Waranga Mixed 3.0 1.6 1.5 3.0 0.0 3.0 0.0 14.5 6.7 0.0 0.7 4.0 23.0 1.6

Costerfield Box-Ironbark 7.1 2.2 4.5 9.0 2.7 6.0 2.5 7.7 9.5 0.0 7.7 2.2 8.9 1.9

Tallarook Foothills Woodland 4.3 2.9 8.7 14.4 2.9 5.8 2.8 11.1 2.9 0.0 3.8 2.9 2.9 1.9

STTH LR WPHE YTH ER PCU ESP SCR RBFT BFCS WAG WWCH NHHE VS CST BTR AMAG SCC

Reedy Lake 0.0 4.8 27.3 0.0 5.1 0.0 0.0 0.0 0.0 0.6 1.9 0.0 0.0 0.0 1.7 12.5 8.6 12.5

Pearcedale 0.0 3.7 27.6 0.0 2.7 0.0 3.7 0.0 1.1 1.1 3.4 0.0 6.9 0.0 0.9 0.0 0.0 0.0

Warneet 2.8 5.5 27.5 0.0 5.3 0.0 0.0 0.0 0.0 1.5 2.1 0.0 3.0 0.0 1.5 0.0 0.0 0.0

Cranbourne 0.0 11.0 20.0 0.0 2.1 0.0 2.0 0.0 5.0 1.4 3.4 0.0 32.0 0.0 1.4 0.0 0.0 0.0

Lysterfield 7.0 1.6 0.0 0.0 1.4 0.0 3.5 0.7 0.0 2.7 0.0 0.0 6.4 0.0 0.0 0.0 0.0 0.0

Red Hill 16.8 3.4 0.0 0.0 2.2 0.0 3.4 0.0 0.7 2.0 0.0 0.0 2.2 5.4 0.0 0.0 0.0 0.0

Devilbend 13.9 0.0 16.7 0.0 0.0 0.0 5.5 0.0 0.0 3.6 0.0 0.0 5.6 5.6 4.6 0.0 0.0 0.0

Olinda 10.2 0.0 0.0 0.0 1.2 0.0 5.1 0.0 0.7 0.0 0.0 0.0 0.0 1.9 0.0 0.0 0.0 0.0

Fern Tree Gum 12.2 0.6 0.0 0.0 1.3 2.8 7.1 0.0 1.9 0.6 0.0 0.0 0.0 4.2 0.0 0.0 0.0 0.0

Sherwin 11.3 5.8 0.0 9.6 2.3 2.9 0.6 3.0 0.0 1.2 0.0 9.8 0.0 5.1 0.0 0.0 0.0 0.0

Heathcote Ju 12.0 0.0 0.0 0.0 0.0 2.8 0.9 2.6 0.0 0.0 0.0 11.7 0.0 0.0 0.0 0.0 0.0 0.0

Warburton 7.6 15.0 0.0 0.0 0.0 1.8 7.6 0.0 0.9 1.5 0.0 0.0 0.0 3.9 2.5 0.0 0.0 0.0

Millgrove 8.6 0.0 0.0 0.0 6.5 2.5 5.4 2.0 5.4 2.2 0.0 0.0 0.0 5.4 3.2 0.0 0.0 0.0

Ben Cairn 12.0 3.3 0.0 0.0 0.0 2.5 7.4 0.0 0.0 0.0 0.0 0.0 2.1 0.0 0.0 0.0 0.0 0.0

Panton Gap 17.3 2.4 0.0 0.0 0.0 3.1 9.2 0.0 3.7 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

OShannassy 7.8 0.0 0.0 0.0 0.0 1.5 3.1 0.0 9.6 0.7 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Ghin Ghin 8.1 2.7 8.4 8.4 3.4 0.0 0.0 0.0 44.7 0.4 1.3 0.0 0.4 0.6 0.6 0.0 8.4 47.6

Minto 25.2 0.0 15.4 0.0 0.0 0.0 3.3 0.0 10.5 0.0 0.0 0.0 0.0 0.0 3.5 0.0 6.7 80.5

Hawke 9.0 4.8 0.0 0.0 0.0 2.1 3.7 0.0 0.0 0.7 0.0 3.2 0.0 0.0 1.5 0.0 0.0 0.0

St Andrews 15.8 3.4 0.0 9.0 0.0 3.7 5.6 4.0 0.9 0.0 0.0 10.0 0.0 0.0 2.7 0.0 0.0 0.0

Nepean 12.0 2.2 0.0 0.0 3.7 0.0 4.0 2.0 0.0 1.1 0.0 0.0 1.1 4.5 0.0 0.0 0.0 0.0

Cape Schanck 0.0 4.3 0.0 0.0 6.4 0.0 4.6 0.0 3.4 0.0 0.0 0.0 33.3 0.0 0.0 0.0 0.0 0.0

Balnarring 4.9 16.5 0.0 0.0 9.1 0.0 3.9 0.0 2.7 1.0 0.0 0.0 4.9 10.1 0.0 0.0 0.0 0.0

Bittern 0.0 0.0 27.7 0.0 2.3 0.0 0.0 0.0 2.3 2.3 6.3 0.0 2.6 0.0 1.3 0.0 0.0 0.0

Bailieston 9.8 0.0 0.0 8.7 0.0 0.0 0.0 6.2 0.0 1.6 0.0 10.0 0.0 2.5 0.0 0.0 0.0 0.0

Donna Buang 5.2 0.0 0.0 0.0 0.0 1.5 7.4 0.0 0.0 1.5 0.0 0.0 0.0 0.0 1.5 0.0 0.0 0.0

Upper Yarra 6.4 0.0 0.0 0.0 0.0 1.3 2.3 0.0 6.4 0.9 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Gembrook 24.3 2.4 0.0 0.0 3.6 0.0 26.6 4.7 2.8 0.0 0.0 0.0 4.8 9.7 0.0 0.0 0.0 0.0

Arcadia 0.0 2.7 27.6 0.0 4.3 3.7 0.0 0.0 0.6 2.1 4.9 8.0 0.0 0.0 1.8 6.7 3.1 24.0

Undera 7.5 3.1 13.5 11.5 2.0 0.0 0.0 0.0 0.0 1.9 2.5 6.5 0.0 3.8 0.0 4.0 3.2 16.0

Coomboona 3.1 1.7 13.9 5.6 4.6 1.1 0.0 0.0 0.0 6.9 3.3 5.6 0.0 3.3 1.0 4.2 5.4 30.4

Toolamba 0.0 2.5 16.0 0.0 5.0 0.0 0.0 0.0 0.8 3.0 3.5 5.0 0.0 0.0 0.8 7.0 3.7 29.9

Rushworth 3.2 0.0 0.0 10.7 3.2 0.0 0.0 1.1 2.7 1.1 0.0 9.6 0.0 2.7 0.0 0.0 0.0 0.0

Sayers 7.5 2.7 0.0 20.2 1.1 0.0 0.0 2.6 0.0 0.5 0.0 5.6 0.0 0.0 0.0 0.0 0.0 0.0

Waranga 0.0 8.9 25.3 2.2 3.4 0.0 0.0 0.0 10.9 1.6 2.4 8.9 0.0 0.0 0.7 5.5 2.7 0.0

Costerfield 9.3 1.1 0.0 15.8 1.1 0.0 0.0 5.5 0.0 1.3 0.0 5.7 0.0 3.3 1.1 5.5 0.0 0.0

Tallarook 4.6 10.3 0.0 2.9 0.0 0.0 5.8 5.6 0.0 1.5 0.0 2.8 0.0 3.8 3.4 0.0 0.0 0.0

RWH WSW STP YFHE WHIP GAL FHE BRTH SPP SIL GCU MUSK MGLK BHHE RFC YTBC LYRE CHE

Reedy Lake 0.6 0.0 4.8 0.0 0.0 4.8 26.2 0.0 0.0 0.0 0.0 13.1 1.7 1.1 0.0 0.0 0.0 0.0

Pearcedale 2.3 5.7 0.0 1.1 0.0 0.0 0.0 0.0 1.1 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Warneet 1.4 24.3 3.1 11.7 0.0 0.0 0.0 0.0 4.6 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Cranbourne 0.0 10.0 4.0 0.0 0.0 2.8 0.0 0.0 0.8 0.0 0.0 0.0 1.4 0.0 0.0 0.0 0.0 0.0

Lysterfield 7.0 0.0 0.0 6.1 0.0 0.0 0.0 0.0 5.4 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Red Hill 6.8 0.0 0.0 0.0 0.0 0.0 0.0 0.0 3.4 2.7 1.4 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Devilbend 7.3 3.6 2.4 0.0 0.0 0.0 0.0 0.0 0.0 1.2 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Olinda 9.1 0.0 0.0 0.0 2.0 0.0 0.0 0.0 2.0 0.0 2.6 0.0 0.0 0.0 0.0 1.9 0.0 0.0

Fern Tree Gum 4.5 0.0 0.0 0.0 3.2 0.0 0.0 0.0 2.6 4.9 1.3 0.0 0.0 0.0 0.0 2.6 0.6 0.6

Sherwin 6.3 0.0 3.5 2.3 0.0 0.0 0.0 6.0 4.2 1.2 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Heathcote Ju 5.9 0.0 4.4 4.7 0.0 0.0 0.0 0.0 3.7 2.5 1.5 0.0 0.0 0.0 0.0 1.8 0.0 0.0

Warburton 0.0 0.0 2.7 6.2 3.3 0.0 0.0 0.0 6.2 4.6 5.7 0.0 0.0 7.4 0.0 3.9 1.4 0.0

Millgrove 8.8 0.0 2.2 5.4 2.6 0.0 0.0 0.0 5.3 3.2 1.1 0.0 0.0 0.9 0.0 1.9 0.0 2.2

Ben Cairn 0.0 0.0 0.0 0.9 3.7 0.0 0.0 0.0 3.7 12.0 2.1 0.0 0.0 0.0 0.0 3.7 0.0 5.6

Panton Gap 0.0 0.0 1.2 4.9 3.8 0.0 0.0 0.0 1.9 2.4 2.9 0.0 0.0 7.7 0.0 0.0 2.4 1.8

OShannassy 3.9 0.0 3.1 8.5 2.9 0.0 0.0 0.0 2.2 3.7 0.0 0.0 0.0 0.0 0.0 1.5 1.6 8.1

Ghin Ghin 6.1 4.7 1.2 6.7 0.0 0.0 0.0 0.0 4.5 6.7 0.0 0.0 2.7 0.0 1.3 0.0 0.0 0.0

Minto 5.0 0.0 5.0 26.7 0.0 0.0 0.0 0.0 5.0 17.5 0.0 0.0 0.0 0.0 2.8 0.0 0.0 0.0

Hawke 4.2 0.0 0.0 3.2 0.0 0.0 0.0 5.2 3.7 0.0 0.0 0.0 0.0 0.5 0.0 0.0 1.7 0.0

St Andrews 8.4 0.0 5.1 5.0 0.0 0.0 0.0 10.0 5.1 0.9 3.4 0.0 0.0 1.0 0.0 0.0 0.0 0.0

Nepean 3.3 0.0 0.0 1.0 0.0 0.0 0.0 0.0 4.7 1.1 0.0 0.0 0.0 5.0 0.0 0.0 0.0 5.6

Cape Schanck 2.6 0.0 0.0 3.4 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 5.2

Balnarring 4.9 0.0 0.0 1.9 0.0 0.0 0.0 0.0 0.0 10.3 0.0 0.0 0.0 3.9 0.0 0.0 0.0 0.0

Bittern 0.0 12.5 2.3 19.5 0.0 0.0 0.0 0.0 3.5 8.0 0.0 0.0 0.0 2.3 0.0 0.0 0.0 0.0

Bailieston 7.3 0.0 0.0 1.1 0.0 0.0 1.2 0.0 2.8 0.8 0.0 0.0 0.0 14.1 0.0 0.0 0.0 0.0

Donna Buang 0.0 0.0 2.2 0.0 3.7 0.0 0.0 0.0 3.7 4.4 4.4 0.0 0.0 0.0 0.0 3.6 3.0 1.5

Upper Yarra 7.0 0.0 6.4 3.9 0.9 0.0 0.0 0.0 3.9 2.3 0.0 0.0 0.0 0.6 0.0 0.8 0.9 0.9

Gembrook 10.9 0.0 0.0 20.2 0.0 0.0 0.0 0.0 4.5 1.8 1.8 0.0 0.0 5.6 0.0 5.5 0.0 11.2

Arcadia 0.0 2.7 8.2 0.0 0.0 4.1 0.0 0.0 0.0 9.8 0.0 0.0 3.7 0.0 4.3 0.0 0.0 0.0

Undera 1.5 1.0 8.7 0.0 0.0 8.6 0.0 0.0 0.0 0.0 0.0 0.0 1.6 0.0 1.5 0.0 0.0 0.0

Coomboona 1.1 0.0 8.1 0.0 0.0 5.4 0.0 0.0 0.0 0.0 0.0 0.0 2.3 0.0 2.6 0.0 0.0 0.0

Toolamba 0.0 6.0 4.5 0.0 0.0 7.8 0.0 0.0 0.0 0.0 0.0 0.0 1.6 0.0 2.0 0.0 0.0 0.0

Rushworth 4.3 0.0 1.1 14.4 0.0 0.0 9.6 11.7 3.2 2.7 1.1 16.0 0.0 9.9 0.0 0.0 0.0 0.0

Sayers 3.7 0.0 0.0 8.0 0.0 0.0 3.1 9.1 5.7 3.7 1.6 3.1 0.0 7.8 0.0 0.0 0.0 0.0

Waranga 1.4 3.4 2.7 16.3 0.0 5.9 14.8 0.0 2.2 0.0 0.0 20.0 0.0 8.1 2.7 0.0 0.0 0.0

Costerfield 6.2 0.0 6.6 0.6 0.0 0.0 15.9 13.9 6.3 3.9 1.6 3.8 0.0 10.8 0.0 0.0 0.0 0.0

Tallarook 9.5 0.0 1.9 5.8 0.0 0.0 0.0 30.6 8.3 0.0 0.9 0.0 0.0 2.3 0.0 0.0 0.0 0.0

OWH TRM MB STHR LHE FTC PINK OBO YR LFB SPW RBTR DWS BELL LWB CBW GGC PIL SKF

Reedy Lake 0.0 15.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 2.9 0.0 0.0 0.4 0.0 0.0 0.0 0.0 0.0 1.9

Pearcedale 0.0 0.0 0.0 0.0 0.0 2.3 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Warneet 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 3.5 0.0 0.0 0.0 0.0 0.0 0.0

Cranbourne 0.0 0.0 1.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 5.5 0.0 4.0 0.0 0.0 0.0 0.0

Lysterfield 0.0 0.0 0.0 0.0 0.0 2.1 0.0 1.4 0.0 0.0 0.0 0.0 0.0 22.1 0.0 0.0 0.0 0.0 0.0

Red Hill 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Devilbend 0.0 0.0 3.6 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 1.8 0.0 0.0 0.0 0.0 0.0 0.0

Olinda 0.0 0.0 0.0 0.0 0.0 2.6 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Fern Tree Gum 0.0 0.0 0.0 0.0 0.0 2.6 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 1.3 1.3 0.0

Sherwin 0.0 0.0 1.2 0.0 0.0 1.7 0.0 1.2 0.0 0.0 0.0 0.6 0.0 0.0 0.0 0.6 0.0 0.0 2.3

Heathcote Ju 0.0 0.0 0.0 0.9 0.0 1.6 0.0 0.0 0.0 0.0 0.0 1.6 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Warburton 0.0 0.0 0.0 1.4 2.1 2.1 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 1.8 0.7 0.0

Millgrove 0.0 0.0 0.0 0.0 0.0 3.5 0.0 0.0 0.0 0.0 0.0 1.1 0.9 0.0 0.0 0.0 0.0 0.0 0.0

Ben Cairn 3.7 0.0 0.0 0.0 4.1 4.6 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 3.7 2.8 0.0

Panton Gap 0.6 0.0 0.0 0.9 1.8 3.1 1.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 4.8 1.8 0.0

OShannassy 0.0 0.0 0.0 0.0 2.4 5.4 2.2 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 2.2 0.0

Ghin Ghin 0.0 0.0 0.0 0.0 0.0 2.4 0.0 1.2 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 1.8

Minto 2.8 0.0 0.0 0.0 0.0 1.7 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 1.7

Hawke 0.0 0.0 0.0 0.0 0.0 1.1 0.0 0.0 0.0 0.0 0.0 1.7 0.0 0.0 0.0 0.0 0.0 0.0 0.0

St Andrews 0.0 0.0 1.7 0.0 0.0 3.4 0.0 0.0 0.0 0.0 0.0 0.0 0.9 15.0 0.0 0.0 0.0 0.0 2.0

Nepean 0.0 0.0 2.2 0.0 0.0 1.9 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Cape Schanck 0.0 0.0 0.0 0.0 0.0 1.7 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 32.2 0.0 0.0 0.0 0.0

Balnarring 0.0 0.0 4.9 0.0 0.0 1.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 16.5 1.0 0.0 0.0 0.0

Bittern 0.0 0.0 0.0 0.0 0.0 2.3 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 5.8 0.0 0.0 0.0 0.0

Bailieston 0.0 0.0 0.0 0.0 0.0 0.0 0.0 3.3 0.0 0.0 0.0 0.0 0.0 0.0 0.0 1.7 0.0 0.0 0.0

Donna Buang 0.7 0.0 0.0 0.0 2.2 2.2 0.8 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.7 4.4 0.0

Upper Yarra 0.0 0.0 0.0 0.0 0.9 2.3 0.0 0.0 0.0 0.0 0.0 0.0 0.7 0.0 0.0 0.0 0.0 0.0 1.6

Gembrook 0.0 0.0 0.8 2.4 1.9 2.8 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Arcadia 0.0 2.5 0.0 0.0 0.0 0.6 0.0 2.5 0.0 1.4 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 2.1

Undera 0.0 0.0 0.5 0.0 0.0 0.0 0.0 0.0 3.2 1.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 2.1

Coomboona 0.0 0.6 0.0 0.0 0.0 0.0 0.0 0.0 2.6 5.9 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 2.2

Toolamba 0.0 3.3 0.0 0.0 0.0 0.0 0.0 2.0 0.0 6.6 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 1.0

Rushworth 0.0 0.0 0.0 0.0 0.0 0.0 0.0 1.1 0.0 0.0 1.1 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Sayers 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.5 0.0 0.0 0.0 0.0 0.0 0.0 0.0 1.6 0.0 0.0 0.0

Waranga 0.0 4.8 0.0 0.0 0.0 0.0 0.0 2.4 0.0 0.0 0.0 0.0 4.8 0.0 0.0 0.0 0.0 0.0 1.4

Costerfield 0.0 0.0 0.0 0.0 0.0 1.6 0.0 1.1 0.0 0.0 3.3 0.0 0.6 0.0 0.0 0.0 0.0 0.0 0.0

Tallarook 0.0 0.0 0.0 0.0 0.0 2.9 0.0 0.0 0.0 0.0 2.9 0.0 0.0 0.0 0.0 0.0 0.0 0.0 1.7

RSL PDOV CRP JW BCHE RCR GBB RRP LLOR YTHE RF SHBC AZKF SFC YRTH ROSE BCOO LFC WG

Reedy Lake 6.7 0.0 0.0 0.0 0.0 0.0 0.0 4.8 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Pearcedale 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Warneet 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 1.4 0.0 0.0 0.0 0.0 0.0 1.8 0.0

Cranbourne 0.8 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Lysterfield 0.0 0.0 0.0 0.0 0.0 0.0 0.7 0.0 0.0 0.0 0.0 0.7 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Red Hill 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 1.4 0.0 0.0 3.4 0.0 0.0 0.0 0.0 0.0

Devilbend 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 2.4 0.0

Olinda 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 1.9 2.0 0.7 1.2 0.0 0.6 0.0 0.0 0.0

Fern Tree Gum 0.0 0.0 0.0 0.0 0.0 0.0 0.6 0.0 0.0 0.0 3.2 1.3 0.0 1.9 0.0 0.0 0.6 0.0 0.0

Sherwin 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.6 0.0 1.2 0.0 0.0 0.0 0.0 0.0

Heathcote Ju 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 1.5 0.0 1.6 0.0 0.0 0.0 0.0 0.0

Warburton 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 1.8 2.1 0.0 4.1 0.0 0.0 0.0 0.0 0.0

Millgrove 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 1.1 0.0 0.0 7.6 0.0 0.0 0.0 0.0 0.0

Ben Cairn 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 4.6 1.9 0.0 2.8 0.0 1.9 2.8 0.0 0.0

Panton Gap 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 1.8 3.7 0.0 1.8 0.0 3.1 1.2 0.0 0.0

OShannassy 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 1.6 1.6 0.0 0.0 0.0 0.7 1.6 0.0 0.0

Ghin Ghin 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 1.8 0.0 0.0 3.9 0.0 0.0 1.2 0.0

Minto 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Hawke 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

St Andrews 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Nepean 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 2.2 1.9 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Cape Schanck 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 2.6 0.9 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Balnarring 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 1.9 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Bittern 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Bailieston 0.0 0.0 0.0 0.0 0.0 3.3 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Donna Buang 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 2.2 0.7 0.0 0.7 0.0 1.5 0.0 0.0 0.0

Upper Yarra 0.0 0.0 0.0 0.0 0.0 0.0 0.9 0.0 0.0 0.0 0.0 1.6 0.0 0.8 0.0 0.0 0.0 0.0 0.0

Gembrook 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 2.8 1.8 0.0 0.0 0.0 0.0 0.0 5.5 0.0

Arcadia 11.0 3.1 1.8 1.2 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 2.5 0.0

Undera 0.0 0.0 0.0 3.8 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 5.9 0.0 0.0 0.0 3.1

Coomboona 1.1 0.0 1.1 2.8 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 1.6

Toolamba 5.0 0.4 0.0 0.0 1.2 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Rushworth 1.4 0.0 0.0 0.0 0.0 0.9 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.5

Sayers 1.7 0.0 0.0 0.0 0.0 0.5 0.5 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Waranga 0.0 0.8 0.0 0.0 0.0 0.0 0.8 4.8 10.9 2.7 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Costerfield 0.0 0.0 0.0 0.0 0.0 0.5 0.0 0.0 0.0 6.3 0.0 1.6 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Tallarook 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 1.9 0.0 0.0 0.0 0.0 0.0 0.0 0.0

PCOO WTG NMIN NFB DB RBEE HBC DF PCL FLAME WWT WBWS LCOR KING

Reedy Lake 1.9 0.0 0.2 0.0 0.0 0.0 0.0 0.0 9.1 0.0 0.0 0.0 0.0 0.0

Pearcedale 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Warneet 0.0 0.0 5.8 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Cranbourne 0.0 0.0 3.1 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Lysterfield 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Red Hill 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Devilbend 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Olinda 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Fern Tree Gum 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Sherwin 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Heathcote Ju 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Warburton 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.8

Millgrove 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Ben Cairn 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Panton Gap 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

OShannassy 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.7

Ghin Ghin 0.0 0.8 0.0 1.8 1.2 0.0 0.0 0.0 0.0 2.6 0.0 0.0 0.0 0.0

Minto 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Hawke 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

St Andrews 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Nepean 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Cape Schanck 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Balnarring 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 1.9 0.0 0.0 0.0 0.0

Bittern 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Bailieston 0.0 3.1 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Donna Buang 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 2.9 0.0 0.0 0.0 0.0

Upper Yarra 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Gembrook 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Arcadia 0.0 0.0 0.0 0.0 1.4 1.4 0.0 0.0 0.0 1.8 2.1 0.0 4.8 0.0

Undera 0.0 3.1 0.0 1.5 0.0 1.0 0.0 0.0 0.0 5.9 0.0 0.0 0.0 0.0

Coomboona 0.0 1.6 5.4 0.0 1.6 0.0 0.0 0.0 0.0 1.7 0.0 0.0 0.0 0.0

Toolamba 0.0 0.0 5.7 0.8 1.5 0.5 0.4 0.0 0.0 0.0 2.5 0.0 0.0 0.0

Rushworth 0.0 0.5 0.0 0.0 0.0 0.0 0.5 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Sayers 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

Waranga 0.0 0.0 1.4 2.4 0.0 0.7 0.0 2.1 0.0 0.0 0.0 1.6 0.0 0.0

Costerfield 0.0 0.0 0.0 0.0 0.0 1.1 0.0 1.1 0.0 0.0 0.0 0.0 0.0 0.0

Tallarook 0.0 0.0 0.0 0.0 0.0 0.0 0.0 3.8 0.0 0.0 0.0 0.0 0.0 0.0

- We will start with the same dissimilarity matrix used in Tutorial 15.1.

That was a Wisconsin double standardization followed by a Bray-Curtis dissimilarity matrix.


> library(vegan)
> macnally.dist <- vegdist(wisconsin(macnally[, c(-1, -2)]), "bray")
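To make the two steps concrete, here is a minimal base R sketch of what a Wisconsin double standardization followed by Bray-Curtis amounts to. The abundance matrix and the names `abund`, `wisc`, `bray` and `toy.dist` are invented for illustration and are not part of the macnally data; in practice `wisconsin()` and `vegdist()` do this work.

```r
# Toy abundance matrix (made-up numbers): 3 sites (rows) x 3 species (columns)
abund <- matrix(c(10, 0, 2,
                   5, 4, 0,
                   0, 8, 1),
                nrow = 3, byrow = TRUE,
                dimnames = list(c("siteA", "siteB", "siteC"),
                                c("sp1", "sp2", "sp3")))

# Wisconsin double standardization:
#   1. divide each species (column) by its maximum
#   2. divide each site (row) by its total
by.max <- sweep(abund, 2, apply(abund, 2, max), "/")
wisc <- sweep(by.max, 1, rowSums(by.max), "/")

# Bray-Curtis dissimilarity between two sites: sum|x - y| / sum(x + y)
bray <- function(x, y) sum(abs(x - y)) / sum(x + y)

# Fill a square matrix with all pairwise dissimilarities
n <- nrow(wisc)
bc <- matrix(0, n, n, dimnames = list(rownames(wisc), rownames(wisc)))
for (i in seq_len(n)) {
  for (j in seq_len(n)) {
    bc[i, j] <- bray(wisc[i, ], wisc[j, ])
  }
}
toy.dist <- as.dist(bc)
toy.dist
```

After the standardization every site sums to 1, so rare and abundant species contribute on a comparable scale before the dissimilarities are calculated.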

- Now, rather than exploring the relationships in a reduced ordination space, we can instead perform a permutational multivariate analysis of variance.
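As a rough sketch of what such an analysis computes, the base R code below partitions squared distances into among-group and within-group parts, forms a pseudo-F ratio, and obtains a p-value by shuffling the group labels. The data, the helper `pseudo.F` and all object names are made up for illustration; this is a simplified one-factor version, not the implementation used by vegan.

```r
set.seed(1)  # arbitrary seed so the permutations are repeatable

# Toy data (made up): 6 sites in 2 groups, described by 2 variables
x <- rbind(c(1, 2), c(2, 1), c(1, 1), c(6, 7), c(7, 6), c(7, 7))
group <- factor(rep(c("a", "b"), each = 3))
d <- as.matrix(dist(x))  # Euclidean here; any dissimilarity could be used

pseudo.F <- function(d, group) {
  N <- nrow(d)
  a <- nlevels(group)
  d2 <- d^2
  # total SS: squared distances summed over all pairs, divided by N
  ss.total <- sum(d2[lower.tri(d2)]) / N
  # within-group SS: the same calculation inside each group
  ss.within <- sum(sapply(levels(group), function(g) {
    idx <- which(group == g)
    sub <- d2[idx, idx, drop = FALSE]
    sum(sub[lower.tri(sub)]) / length(idx)
  }))
  ss.among <- ss.total - ss.within
  (ss.among / (a - 1)) / (ss.within / (N - a))
}

F.obs <- pseudo.F(d, group)

# Null distribution: shuffle the group labels among sites
F.perm <- replicate(999, pseudo.F(d, sample(group)))

# The observed value counts as one of the permutations
p.value <- (sum(F.perm >= F.obs) + 1) / (999 + 1)
c(F = F.obs, p = p.value)
```

Because the test only needs the distance matrix and the labels, it works with dissimilarities such as Bray-Curtis, for which a conventional MANOVA would not be appropriate.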


> adonis(macnally.dist ~ macnally$HABITAT)

Call:
adonis(formula = macnally.dist ~ macnally$HABITAT)

Terms added sequentially (first to last)

                 Df SumsOfSqs MeanSqs F.Model    R2 Pr(>F)
macnally$HABITAT  5      3.50   0.699    4.72 0.432  0.001 ***
Residuals        31      4.60   0.148         0.568
Total            36      8.09                 1.000
---
Signif. codes:  0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

- Clearly, bird communities differ among habitats. If we consider mixed forest to be a reference, how do the bird communities of the other habitat types compare to the mixed forest type?


> habitat <- factor(macnally$HABITAT, levels = c("Mixed", "Box-Ironbark", "Foothills Woodland", "Gipps.Manna",
+     "Montane Forest", "River Red Gum"))
> mm <- model.matrix(~habitat)
> colnames(mm) <- gsub("habitat", "", colnames(mm))
> mm <- data.frame(mm)
> macnally.adonis <- adonis(macnally.dist ~ Box.Ironbark + Foothills.Woodland + Gipps.Manna + Montane.Forest +
+     River.Red.Gum, data = mm, contr.unordered = "contr.treat")
> macnally.adonis

Call:
adonis(formula = macnally.dist ~ Box.Ironbark + Foothills.Woodland + Gipps.Manna + Montane.Forest + River.Red.Gum, data = mm, contr.unordered = "contr.treat")

Terms added sequentially (first to last)

                   Df SumsOfSqs MeanSqs F.Model    R2 Pr(>F)
Box.Ironbark        1      0.70   0.702    4.74 0.087  0.001 ***
Foothills.Woodland  1      0.34   0.336    2.27 0.042  0.027 *
Gipps.Manna         1      0.72   0.722    4.87 0.089  0.002 **
Montane.Forest      1      0.36   0.363    2.45 0.045  0.018 *
River.Red.Gum       1      1.37   1.372    9.25 0.170  0.001 ***
Residuals          31      4.60   0.148         0.568
Total              36      8.09                 1.000
---
Signif. codes:  0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1
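The dummy-variable step used above can be checked on a small example. The sketch below uses a made-up four-site factor (`hab` and `mm.toy` are illustrative names, not objects from the workshop) to show how listing "Mixed" first makes it the reference level, and why the column names in the formula contain dots instead of hyphens and spaces.

```r
# Toy factor (made-up sites) with "Mixed" listed first so it is the reference
hab <- factor(c("Mixed", "Box-Ironbark", "River Red Gum", "Mixed"),
              levels = c("Mixed", "Box-Ironbark", "River Red Gum"))

# Intercept plus one 0/1 indicator column per non-reference level
mm.toy <- model.matrix(~hab)

# Strip the factor name that model.matrix() prepends to each level
colnames(mm.toy) <- gsub("hab", "", colnames(mm.toy))

# data.frame() converts names to syntactic ones: "-" and " " become "."
mm.toy <- data.frame(mm.toy)
names(mm.toy)
mm.toy
```

Sites in the reference habitat score 0 on every indicator column, so each indicator marks one habitat against that baseline. Note that the adonis output states that terms are added sequentially, so each row of the table is adjusted only for the terms listed above it.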